Additive zoom level decomposition for e-profile Part IV

In the former articles of this series we described our strategy to find optimal zoom levels from arbitrary data. The idea is to avoiding that two points in same zoom level be too close together. Our first approach failed as we tested it with real data. We had missed a detail that we describe in this article. Also we give a solution to this problem.

Additive zoom level decomposition for e-profile Part IV

At first I like to show you the case that goes wrong with the former algorithm.

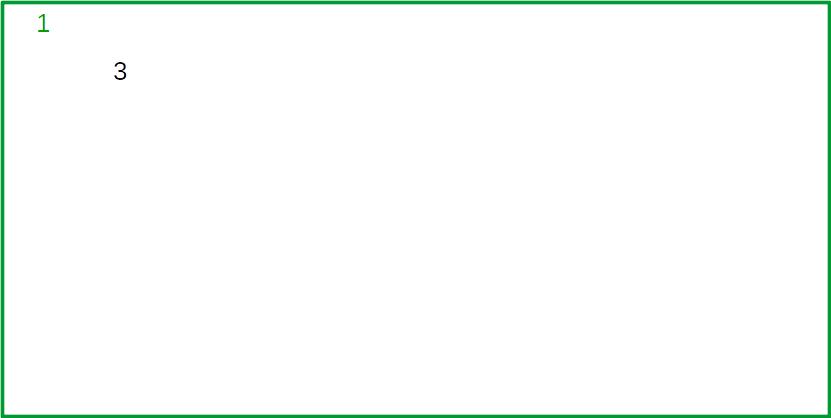

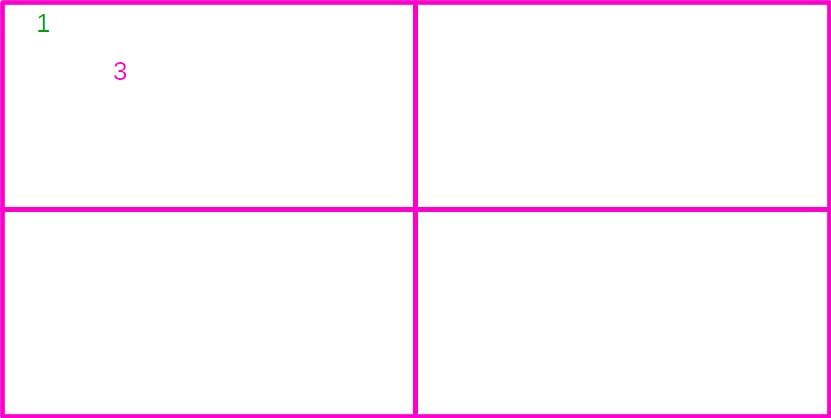

We have two points 1 and 3 that are quite near to each other. The first Point 1 is assigned to the green region.

Sice the green region is now full, for point 3 a subdivision has to take place. Point 3 is then assigned to the pink NW quadrant where it belongs.

If we now plot green level and pink level we have two points closely together wich we would have seperated from each other.

So how do we aviod this? And how do we not break the complete algorithm we have optimized with so much efford?

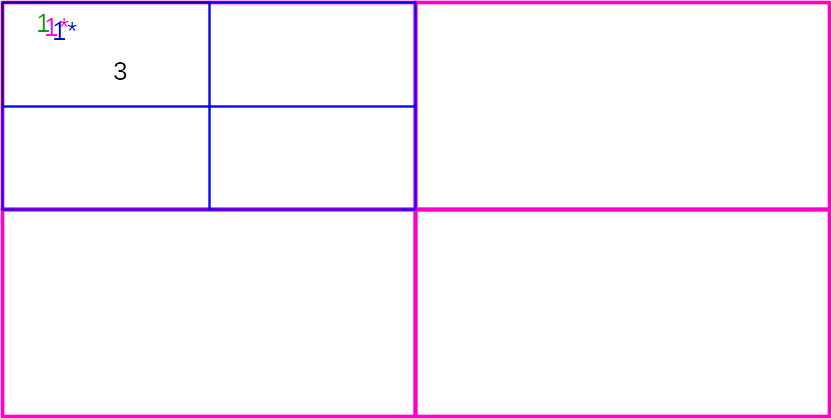

The idea is quite simple: We have to hinder point 3 to ocupy the NW pink cell, since there point 1 already resides. Therefore we occupy this cell with a proxy 1* of point 1 before we try to assign point 3 to the subdivisions .

When we now try to assign point 3 to the pink NW quadrant the quadrant is already occupied and has to be subdived. and we also add a proxy point 1 to the blue NW quadrant since the proxy of point 1 belongs to it.

Again point 3 find finds an already occupied blue NW quadrant and urges for a subdivision.

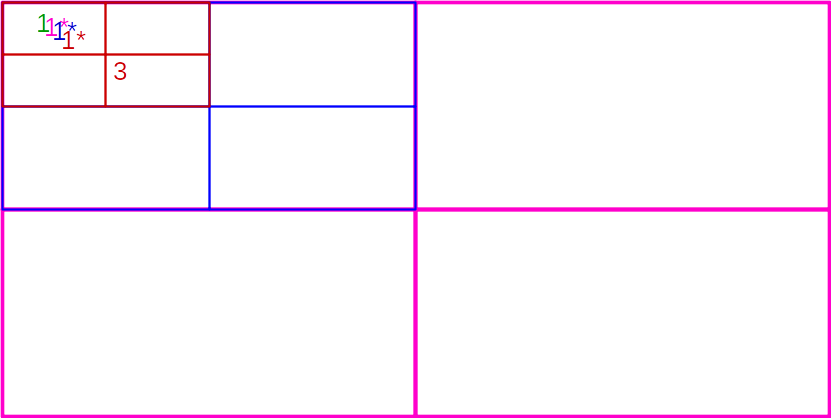

Again a proxy of the proxy of the proxy of point 1 is assigned to the red NW quadrant. But now point 3 belongs to the red SE quadrant which is empty and can be occupied.

Point 3 is now three zoom levels "distant" to point 1.

One can easily derive the following rule: The closer two points are the farer the get in terms of zoom levels. And at the end of the day this is what zoom level decomposition likes to achieve.

The attentive reader may have noticed that the new algorithm does not talk about the optimization from part III of this series which should avoid an other problem with random near points.

And you are right. I dropped this optimization because

- it interacts badly with the new algorithm.

- the outcome without this optimization is better than expected.

And what about the performance, you will wonder?

| Number points | Proc w. OOP wrapper | New proc | |

| Runtime (ms) | 100000 | 115 | 122 |

| 1000000 | 1380 | 1625 | |

| BaseMemory (MB) | 100000 | 84.6 | 84 |

| 1000000 | 99.2 | 98.4 | |

| Memory total | 100000 | 100.9 | 109.3 |

| 1000000 | 272.9 | 361.1 |

The differences in runtime are within the errror margins. But the memory consumption is a bit higher (due to the blocked nodes and need for more decomposition). For 1Mio. we need 30% more memory.

Here an animation of the new quadtree algorithm for realtime zoom of windbarbs of https://e-profile.eu.